An Introduction to AI for Investigation: Theory and Practice

The following is an edited, Claude-generated summary of a Whisper-generated transcript of a guest lecture I gave at Berkeley's Human Rights Center on March 4, 2025.

I was excited to demonstrate the transformative potential of artificial intelligence in investigation, knowing how limited AI's use is in the field.

The goal was to spark imagination about what AI could do, rather than just what it is doing right now.

We kicked off with the basics: simple regression models that have exposed US spyplane activity and shone a light on LA police crime misclassification. Next, we dove into visual techniques using convolutional neural networks, which played a major role in identifying bombs dropped on Gaza. Finally, we delved into embedding models and Large Language Models, demonstrating how they can help researchers comb through huge documents and perform reverse image searches.

We wrapped up by discussing AI models' efficacy and ethics, and the concept of hallucinations.

AI products—from fintech to healthcare, let alone investigation—are still in their infancy, and I'm super excited to help push them forward.

What Is (and Isn't) AI?

We began by establishing a fundamental distinction between AI and deterministic programming.

What AI isn't: AI is not automation or traditional deterministic programming, where a computer follows explicit instructions to produce a guaranteed outcome.

What AI is: AI is a mechanism that learns patterns from data rather than following a fixed set of instructions. AI generalizes from examples.
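
To make the distinction concrete, here is a minimal sketch that contrasts a hand-written rule with a cutoff learned from labeled examples. The data, the labels, and the function names are invented for illustration; nothing here comes from the lecture itself.

```python
# Hypothetical toy data: (top speed in km/h, label). Values are made up.
EXAMPLES = [(20, "bike"), (25, "bike"), (32, "bike"),
            (45, "car"), (60, "car"), (90, "car")]


def rule_based(speed):
    """Deterministic programming: a human chose the cutoff of 40."""
    return "car" if speed > 40 else "bike"


def learn_threshold(examples):
    """Learning: pick the cutoff that misclassifies the fewest examples."""
    speeds = sorted(speed for speed, _ in examples)
    # Candidate cutoffs are the midpoints between neighboring speeds.
    candidates = [(a + b) / 2 for a, b in zip(speeds, speeds[1:])]

    def errors(cutoff):
        # Count examples where "speed above cutoff" disagrees with the label.
        return sum((speed > cutoff) != (label == "car")
                   for speed, label in examples)

    return min(candidates, key=errors)


def learned_classifier(speed, cutoff):
    """Apply the learned cutoff to a new, unseen speed."""
    return "car" if speed > cutoff else "bike"


cutoff = learn_threshold(EXAMPLES)
print(cutoff)                          # 38.5 on this toy data
print(learned_classifier(50, cutoff))  # car
```

The rule never changes unless a person edits it, while the learned cutoff moves if the examples change. That is the sense in which a model generalizes from data.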

Three Categories of AI for Investigation

1. Pattern Recognition with Machine Learning

I shared how even simple machine learning algorithms can yield powerful investigative results. I described some theory—the canonical example of house price data—and then went into practice.

- Finding Spy Planes (BuzzFeed): Peter Aldhous collected data on known spy plane characteristics (turning rates, speeds, altitudes) and trained a model to find other spy planes in public flight data from Flightradar24. This approach took government surveillance that was hiding in plain sight and made it visible.

- Reclassifying Crimes (LA Times): Ben Poston and Joel Rubin used machine learning to show that the LAPD had systematically misclassified violent crimes as minor offenses. Their model identified patterns in crime reports that revealed the true nature of incidents, holding the department accountable.

These examples demonstrate that you don't need extremely complex neural networks to uncover significant findings. Often, basic pattern recognition with carefully-chosen features can reveal powerful insights.
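
The house price example mentioned above can be sketched as a one-feature linear regression. The sizes and prices are invented, and this is only the textbook least-squares fit, not the method used in either investigation.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical training data: floor area in square meters, price in $1,000s.
sizes_m2 = [50, 70, 90, 110, 130]
prices_k = [210, 270, 330, 400, 440]

slope, intercept = fit_line(sizes_m2, prices_k)


def predict(size_m2):
    """Estimate a price for a house the model has not seen."""
    return intercept + slope * size_m2


print(round(slope, 2), round(intercept, 2))  # 2.95 64.5
print(round(predict(100), 1))                # 359.5
```

The feature here is a single number, but the same idea extends to several carefully chosen features, such as the turning rates, speeds, and altitudes described above.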

2. Deep Learning for Visual Analysis

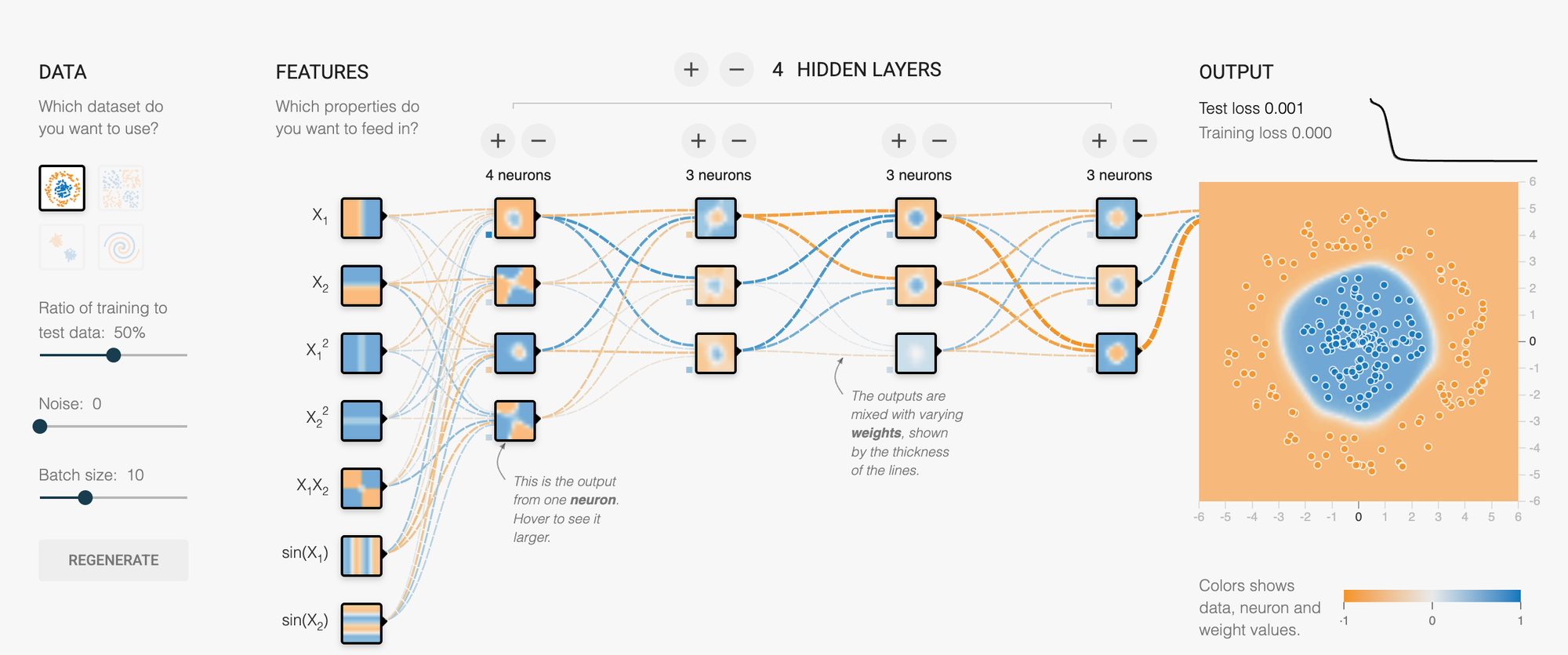

We then moved on to more complex models based on neural networks, which I demonstrated through TensorFlow Playground, showing how even a 20-parameter network can learn to classify complex patterns. For investigative work, these techniques enable the processing of vast amounts of data that would be impossible to analyze manually.

- Bomb Crater Identification (New York Times): Journalists trained deep learning models through Picterra to identify 2,000-pound bomb craters in Gaza in areas where Israel had told civilians they would be safe. The investigation found over 200 such craters, providing evidence that heavy munitions struck areas designated as safe for civilians. One person noted how quickly the process ran once the model was properly trained: just 45 minutes to identify all the bomb craters across the imagery.

- Cluster Munition Detection: Researchers used synthetic data generation to overcome limited training examples. By taking photos of cluster munitions and synthetically creating variations with different shadows, breaks, and distortions, they built a robust training set that could identify these weapons in conflict zones.
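
The synthetic-variation idea in the cluster munition example can be sketched in a few lines. This is an illustration only, not the researchers' pipeline: the image is a tiny invented grid of grayscale values, and the function names and parameters are hypothetical. Real work would use photographs and an image library.

```python
import random


def flip_horizontal(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def add_shadow(img, start_col, width, factor=0.5):
    """Darken a vertical band of columns to mimic a shadow."""
    return [[int(p * factor) if start_col <= col < start_col + width else p
             for col, p in enumerate(row)]
            for row in img]


def add_noise(img, rng, amount=20):
    """Shift each pixel by a small random value, clamped to 0..255."""
    return [[min(255, max(0, p + rng.randint(-amount, amount))) for p in row]
            for row in img]


def make_variants(img, count, seed=0):
    """Create `count` randomized variations of one source image."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    width = len(img[0])
    variants = []
    for _ in range(count):
        out = img
        if rng.random() < 0.5:
            out = flip_horizontal(out)
        out = add_shadow(out, rng.randrange(width), rng.randint(1, width))
        out = add_noise(out, rng)
        variants.append(out)
    return variants


# Hypothetical 4x4 grayscale "photo" (0 = black, 255 = white).
SAMPLE = [
    [200, 200, 40, 40],
    [200, 180, 60, 40],
    [180, 160, 80, 60],
    [160, 140, 100, 80],
]

variants = make_variants(SAMPLE, count=5)
print(len(variants))  # 5
```

Each variant keeps the label of the source image, so one labeled photo becomes several training examples.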

3. Transformer Models and Language Processing

The evolution from recurrent neural networks to transformers (introduced in "Attention Is All You Need") revolutionized natural language processing:

- Document Analysis and Translation: I demonstrated a tool I built for parsing documents in foreign languages, which was used for analyzing Portuguese and Persian documents. This tool combines multiple AI models—document intelligence models to recognize document structure and transformer-based translation models—creating much more accurate results than older OCR methods.

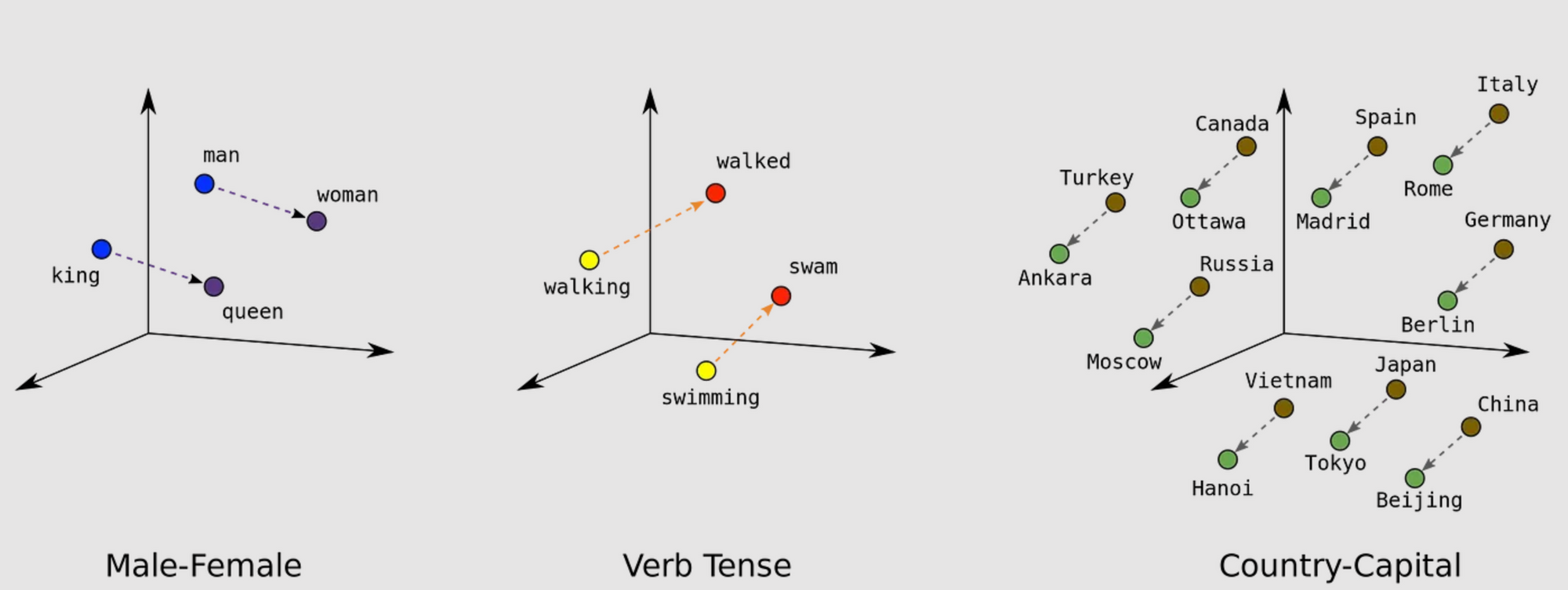

- Embedding Models: I explained how transformer models create vector representations where semantically similar concepts cluster together. I quoted the linguist J.R. Firth ("You shall know a word by the company it keeps") to illustrate how these models capture meaningful relationships between words, which gives the vectors useful arithmetic properties (for example, the embedding for "king" - "man" + "woman" lands close to the embedding for "queen").

- Reverse Image Search: These embedding techniques also power visual search technologies that can help verify the provenance of images—critical for fact-checking and geolocating in human rights investigations.

- Transcription: OpenAI's Whisper, an open-source transcription model, can accurately process audio in multiple languages. This is invaluable for analyzing radio transmissions, videos, and other audio evidence in investigations.

Large Language Models: Power and Pitfalls

We had a lively discussion about ChatGPT, Claude, and similar models. Students shared their experiences using these tools for various tasks:

- Email composition and tone adjustments.

- Generating complex search queries.

- Finding potential email addresses for hard-to-reach sources.

- Summarizing content and extracting text from photos.

- Explaining complex topics, "like I'm five years old."

This led to an important discussion about the term "hallucination" and what's actually happening when LLMs generate incorrect information. I brought up Andrej Karpathy's point that, in a sense, every output is a hallucination: the model generates text the same way whether the result turns out to be right or wrong. This is a critical distinction for anyone using LLMs to understand.

I demonstrated powerful applications of LLMs in investigative contexts:

- Retrieval-Augmented Generation (RAG): Combining embedding models, knowledge graphs, BM25 searches, etc., with LLMs to answer questions about specific documents. The system first retrieves the most relevant passages and then has the LLM answer from them, which reduces hallucinations by grounding responses in source material.

- Structured data extraction: Using LLMs to convert unstructured documents like invoices into structured JSON data that can be analyzed programmatically—a game-changer for processing large document sets.
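
The retrieval half of RAG can be sketched with a small BM25 ranker followed by prompt assembly. The three documents, the prompt wording, and the function names are hypothetical, and the call to an actual LLM is deliberately left out. A production system would more likely use a search library or a vector database than this hand-rolled scorer.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score every document against the query with the BM25 formula."""
    tokenized = [tokenize(d) for d in docs]
    avg_len = sum(len(t) for t in tokenized) / len(tokenized)
    # Number of documents that contain each term at least once.
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    n_docs = len(docs)
    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        score = 0.0
        for term in tokenize(query):
            tf = counts[term]
            if tf == 0:
                continue
            df = doc_freq[term]
            idf = math.log((n_docs - df + 0.5) / (df + 0.5) + 1)
            length_norm = 1 - b + b * len(tokens) / avg_len
            score += idf * tf * (k1 + 1) / (tf + k1 * length_norm)
        scores.append(score)
    return scores


def retrieve(query, docs, top_k=2):
    """Return up to top_k documents with a non-zero score, best first."""
    scores = bm25_scores(query, docs)
    ranked = sorted(range(len(docs)), key=lambda i: scores[i], reverse=True)
    return [docs[i] for i in ranked[:top_k] if scores[i] > 0]


def build_prompt(question, passages):
    """Assemble a grounded prompt; sending it to an LLM is out of scope."""
    sources = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return ("Answer using only the sources below and cite them by number.\n\n"
            f"Sources:\n{sources}\n\nQuestion: {question}")


# Hypothetical mini-corpus, invented for illustration.
DOCS = [
    "Invoice 1042 lists payment for drone parts shipped to the port",
    "Meeting notes discuss the quarterly budget and staffing",
    "The shipping manifest records cargo arriving at the port in March",
]

question = "Which invoice covers drone parts?"
passages = retrieve(question, DOCS)
print(build_prompt(question, passages))
```

Only the invoice document shares terms with the question, so it is the only passage placed in the prompt. The model is then asked to answer from that passage rather than from memory.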

Ethics and Limitations

We explored several crucial concerns about AI in investigations:

- Data Privacy: Should organizations send sensitive investigative material to commercial AI providers? I discussed alternatives like using private endpoints through Microsoft Azure, Google Cloud, or AWS—or running models locally. (See Ollama and MLX.)

- Explainability: Most advanced models function as black boxes. While the field of AI interpretability exists, it hasn't yet solved this fundamental challenge. This could pose a problem for auditability.

- Dependency Risk: The danger of investigators becoming overly reliant on AI tools, especially when those tools might contain biases or errors.

- Human Verification: The critical importance of having human experts verify AI-generated insights, particularly given the "hallucination" problem.

One student raised an important question about challenging AI outputs when you aren't in control of the inputs, citing UnitedHealth's use of AI for claim approvals/denials. This led to a discussion about algorithmic auditing and the challenges of holding black-box systems accountable.

Practical Tools for Investigators

I organized AI tools on a spectrum from technical complexity to ease of use:

More Technical:

- Writing code yourself, perhaps with the help of LLMs

- Hugging Face (with special mention of their journalism unit)

- OpenAI's Whisper for transcription

- Azure AI services (Vision Studio, Document Intelligence Studio)

- Datasaur for understanding RAG and different LLMs

- Ollama and MLX

Middle Ground:

- Bellingcat Toolkit

- Datasette (by Simon Willison) for technical work with (and without) AI

- Picterra (used by NYT for the bomb crater investigation)

More Accessible:

- ChatGPT/Claude/Gemini

- Google Pinpoint (though some students noted limitations)

- GeoSpy for image geolocation

I emphasized that you don't necessarily need the latest models for many investigative tasks, and that sometimes simpler approaches yield better results. That said, the further down the technical stack you go, the more flexibility and control you have.

Learning Resources

I shared resources for those wanting to learn more:

Technical Resources:

- Deeplearning.AI (Andrew Ng's courses)

- Stanford AI Courses

- Andrej Karpathy's videos (particularly good for understanding LLMs)

- Simon Willison's blog

Investigation-Focused Resources:

- investigate.ai

- AI for Data Journalism

- Craig Silverman's newsletter

- Henk van Ess' newsletter

- Nico Dekens' blog

Real-World Applications

Throughout the session, students asked insightful questions about applying these techniques to their own investigations. (Removed for privacy and security concerns.)

Conclusion

By understanding the strengths and limitations of different AI approaches, investigators can choose the right tool for each task, whether it's simple machine learning to find patterns in data, computer vision to analyze images at scale, or LLMs to process and structure documents. The technology is evolving rapidly, but its true impact comes from thoughtful application by skilled investigators who understand both what it can do and what it cannot.